API Price Comparison Table (2026-04-27)

A side-by-side comparison of per-token API prices for OpenAI GPT-5.x, DeepSeek-V4, MiMo-V2, and Moonshot Kimi models (updated 2026-04-27).

Short Context

| Model | Input ($ / 1M tokens) | Cached Input ($ / 1M tokens) | Output ($ / 1M tokens) |
|---|---|---|---|
| GPT-5.5 | 5.00 | 0.50 | 30.00 |
| GPT-5.5-pro | 30.00 | – | 180.00 |
| GPT-5.4 | 2.50 | 0.25 | 15.00 |
| GPT-5.4-mini | 0.75 | 0.075 | 4.50 |
| GPT-5.4-nano | 0.20 | 0.02 | 1.25 |
| GPT-5.4-pro | 30.00 | – | 180.00 |
| DeepSeek-V4-Flash | 0.14 | 0.0028 | 0.28 |
| DeepSeek-V4-Pro | 0.435† | 0.003625† | 0.870† |
| MiMo-V2-Pro | 1.00 | 0.20 | 3.00 |
| MiMo-V2-Flash | 0.10 | 0.01 | 0.30 |
| kimi-k2-0905-preview | 0.60 | 0.15 | 2.50 |
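To turn the per-1M-token rates above into a cost for one request, multiply each token count by its rate and divide by 1,000,000. The sketch below does exactly that with the short-context prices. It is a minimal illustration, not any provider's billing logic: `Price`, `SHORT_CONTEXT`, and `request_cost` are hypothetical names, and it assumes that cached tokens are a subset of the input tokens billed at the cached rate while the remaining input is billed at the normal input rate. Real billing may add rules (for example, minimum cacheable prefix sizes) that this ignores.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Price:
    """Short-context prices in USD per 1M tokens."""
    input: float
    cached_input: Optional[float]  # None where the table shows "–"
    output: float


# Rows copied from the Short Context table (DeepSeek-V4-Pro at the † discounted price).
SHORT_CONTEXT = {
    "GPT-5.5": Price(5.00, 0.50, 30.00),
    "GPT-5.5-pro": Price(30.00, None, 180.00),
    "GPT-5.4": Price(2.50, 0.25, 15.00),
    "GPT-5.4-mini": Price(0.75, 0.075, 4.50),
    "GPT-5.4-nano": Price(0.20, 0.02, 1.25),
    "GPT-5.4-pro": Price(30.00, None, 180.00),
    "DeepSeek-V4-Flash": Price(0.14, 0.0028, 0.28),
    "DeepSeek-V4-Pro": Price(0.435, 0.003625, 0.870),
    "MiMo-V2-Pro": Price(1.00, 0.20, 3.00),
    "MiMo-V2-Flash": Price(0.10, 0.01, 0.30),
    "kimi-k2-0905-preview": Price(0.60, 0.15, 2.50),
}


def request_cost(price: Price, input_tokens: int, cached_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request.

    Assumption: cached_tokens is the part of input_tokens served from cache and
    billed at the cached rate; the rest of the input is billed at the normal rate.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    if cached_tokens and price.cached_input is None:
        raise ValueError("no cached-input price is listed for this model")
    cached_rate = price.cached_input if price.cached_input is not None else 0.0
    uncached_tokens = input_tokens - cached_tokens
    # Token counts times $/1M rates; dividing by 1M converts to dollars.
    per_million_total = (
        uncached_tokens * price.input
        + cached_tokens * cached_rate
        + output_tokens * price.output
    )
    return per_million_total / 1_000_000


if __name__ == "__main__":
    # 100K input tokens (80K of them cached) and 5K output tokens on GPT-5.5.
    cost = request_cost(SHORT_CONTEXT["GPT-5.5"], 100_000, 80_000, 5_000)
    print(f"${cost:.4f}")
```

For the GPT-5.5 example the arithmetic is (20K × 5.00 + 80K × 0.50 + 5K × 30.00) / 1M = $0.29. Models listed with "–" for cached input raise an error if cached tokens are passed, rather than silently guessing a rate.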

Long Context

| Model | Input ($ / 1M tokens) | Cached Input ($ / 1M tokens) | Output ($ / 1M tokens) |
|---|---|---|---|
| GPT-5.5 | 10.00 | 1.00 | 45.00 |
| GPT-5.5-pro | 60.00 | – | 270.00 |
| GPT-5.4 | 5.00 | 0.50 | 22.50 |
| MiMo-V2-Pro | 2.00 | 0.40 | 6.00 |
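Comparing the two tables row by row, each long-context rate is a fixed multiple of the same model's short-context rate. The short sketch below recomputes those ratios directly from the listed prices. It only checks the published numbers: `SHORT`, `LONG`, and `multipliers` are hypothetical names, and the code says nothing about where each provider draws the long-context threshold.

```python
# (input, cached_input, output) in USD per 1M tokens, copied from the two tables.
# None marks a "–" (no cached-input price listed).
SHORT = {
    "GPT-5.5": (5.00, 0.50, 30.00),
    "GPT-5.5-pro": (30.00, None, 180.00),
    "GPT-5.4": (2.50, 0.25, 15.00),
    "MiMo-V2-Pro": (1.00, 0.20, 3.00),
}
LONG = {
    "GPT-5.5": (10.00, 1.00, 45.00),
    "GPT-5.5-pro": (60.00, None, 270.00),
    "GPT-5.4": (5.00, 0.50, 22.50),
    "MiMo-V2-Pro": (2.00, 0.40, 6.00),
}


def multipliers(model: str) -> tuple:
    """Long-context price divided by short-context price, column by column."""
    return tuple(
        None if short is None else round(long / short, 4)
        for short, long in zip(SHORT[model], LONG[model])
    )


if __name__ == "__main__":
    for model in LONG:
        print(model, multipliers(model))
```

For the three GPT rows this gives 2× on input and cached input and 1.5× on output; for MiMo-V2-Pro all three columns double.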

Notes:

- All prices are in US dollars per 1M tokens (overseas pricing).
- † DeepSeek-V4-Pro prices shown reflect a limited-time 75% discount (original prices: Input $1.74 / Cached Input $0.0145 / Output $3.48).
- DeepSeek-V4 models support a 1M-token context window and up to 384K output tokens. Both Flash and Pro support JSON output, tool calls, and chat prefix completion; FIM (fill-in-the-middle) completion is available in non-thinking mode only.
- MiMo-V2-Pro supports a 1M-token context window (up to 256K is billed at the short-context price, above 256K at the long-context price) and up to 128K output tokens. MiMo-V2-Flash supports a 256K context window and up to 64K output tokens. Both support deep thinking, tool calls, structured output, and web search. Cache writes are currently free.
- The data above are taken from the following official pricing pages:
  - OpenAI API Pricing (GPT-5 family)
  - DeepSeek Pricing
  - Moonshot Kimi Pricing
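Two of the notes above describe pricing rules that reduce to simple arithmetic: the DeepSeek-V4-Pro discount and MiMo-V2-Pro's context-length tiers. The sketch below encodes both, under stated assumptions. `apply_discount`, `mimo_v2_pro_rates`, and `TIER_THRESHOLD` are hypothetical names; "256K" is assumed to mean 256,000 tokens, and the whole request is assumed to be billed at a single tier chosen by its context length rather than split token by token. Check the official pricing pages for the exact rule.

```python
# Two pricing rules from the notes, as plain arithmetic.
# All prices are USD per 1M tokens.

# DeepSeek-V4-Pro list prices (from the † note) and the limited-time discount.
DEEPSEEK_V4_PRO_LIST = {"input": 1.74, "cached_input": 0.0145, "output": 3.48}
DISCOUNT = 0.75  # 75% off


def apply_discount(prices: dict, discount: float) -> dict:
    """Return each price multiplied by (1 - discount), rounded to 6 decimals."""
    return {name: round(p * (1 - discount), 6) for name, p in prices.items()}


# MiMo-V2-Pro short- and long-context prices (from the two tables).
MIMO_V2_PRO = {
    "short": {"input": 1.00, "cached_input": 0.20, "output": 3.00},
    "long": {"input": 2.00, "cached_input": 0.40, "output": 6.00},
}
# Assumption: "256K" is read as 256,000 tokens, and one tier applies to the whole
# request based on its context length (not split token by token).
TIER_THRESHOLD = 256_000


def mimo_v2_pro_rates(context_tokens: int) -> dict:
    """Pick the price tier for a request of the given context length."""
    tier = "short" if context_tokens <= TIER_THRESHOLD else "long"
    return MIMO_V2_PRO[tier]


if __name__ == "__main__":
    print(apply_discount(DEEPSEEK_V4_PRO_LIST, DISCOUNT))
    print(mimo_v2_pro_rates(200_000)["input"], mimo_v2_pro_rates(300_000)["input"])
```

The discounted values match the DeepSeek-V4-Pro row of the Short Context table (1.74 × 0.25 = 0.435, and likewise for the other two columns).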

Update History

- 2026-04-27 — Added GPT-5.5, GPT-5.4 family; DeepSeek-V4-Flash/Pro; MiMo-V2-Pro/Flash

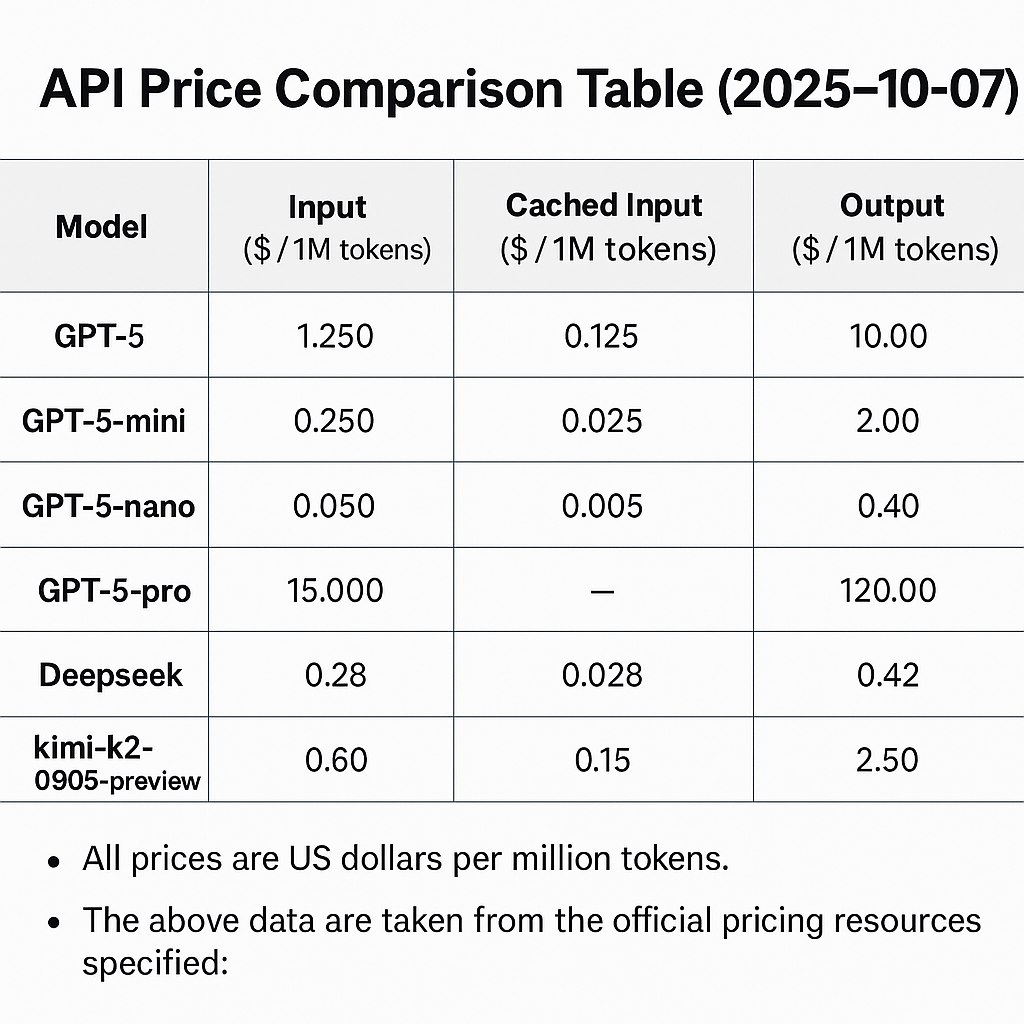

- 2025-10-07 — Initial post: GPT-5 family, Deepseek, Kimi pricing